Gemini 3.1 Flash-Lite Review 2026: Fast, Cheap-ish, and Suspiciously Close to 2.5 Flash

Google just shipped Gemini 3.1 Flash-Lite. 363 tokens per second, 1M context window, pricing that undercuts Gemini 2.5 Flash. Sounds like the new budget king, right? Not so fast. Put the pricing side by side and Flash-Lite isn't the dramatic cost reduction the press releases want you to believe. It's a speed upgrade with a modest price trim.

I track LLM pricing data on this site, so when Google drops a new budget model, I pull the numbers apart.

TL;DR

- Speed king: 363 tok/s output speed — 45% faster than Gemini 2.5 Flash (249 tok/s), 2.5x faster time-to-first-token

- Pricing reality: $0.25/1M input, $1.50/1M output. That's 17% cheaper input and 40% cheaper output than 2.5 Flash — real savings, but not a new price tier

- Benchmarks punch up: GPQA Diamond 86.9%, LiveCodeBench 72.0%, Elo 1432 — competitive with models costing 4-10x more

- Bottom line: Flash-Lite is a speed play, not a budget play. If latency matters, switch. If you're optimizing purely on output cost, DeepSeek V3.2 is still cheaper ($0.42 vs $1.50 per 1M output tokens), though its input price is slightly higher ($0.28 vs $0.25)

What Is Gemini 3.1 Flash-Lite?

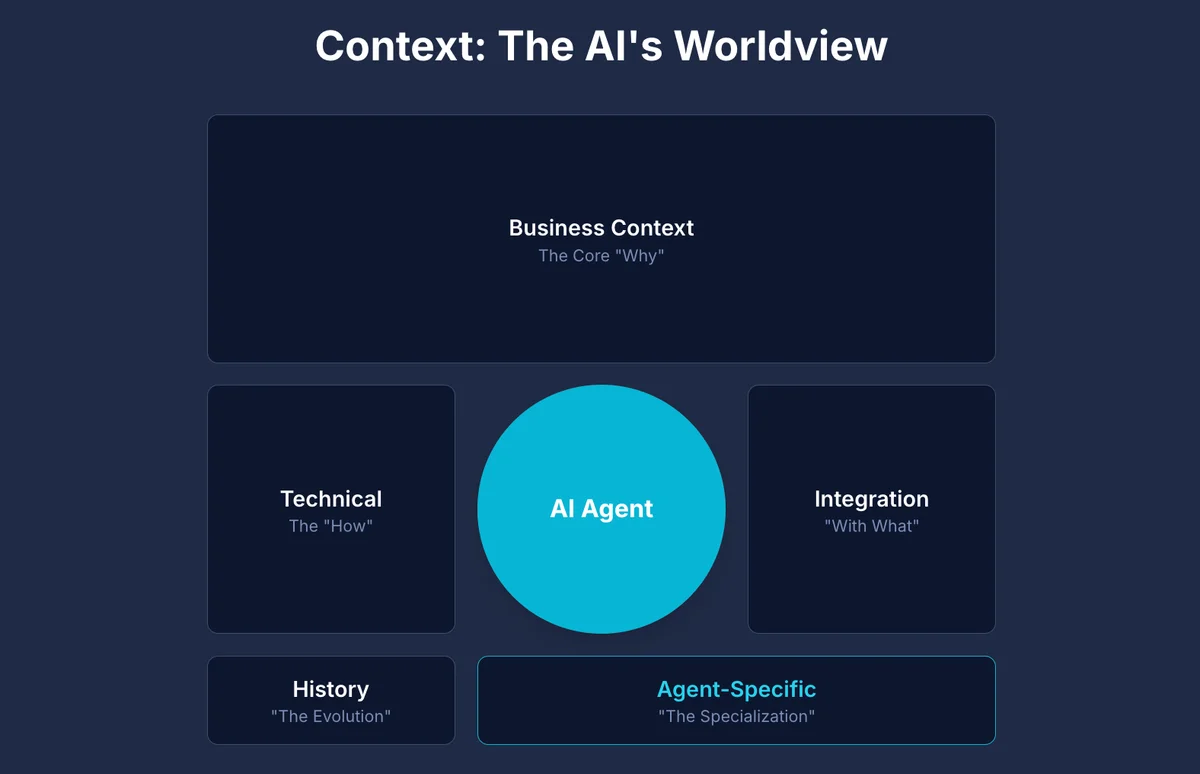

Gemini 3.1 Flash-Lite is Google's most cost-efficient model in the Gemini 3 family. Built on the same architecture as Gemini 3 Pro, it's designed for high-volume, latency-sensitive workloads — classification, translation, content moderation, and real-time chat. It replaces the now-deprecated Gemini 2.0 Flash and 2.0 Flash-Lite.

Gemini 3.1 Flash-Lite

Developer: Google DeepMind

Google's fastest, most cost-efficient Gemini 3 model. Built on Gemini 3 Pro architecture, optimized for high-volume, latency-sensitive workloads at scale.


Key Specs at a Glance

| Spec | Value |
|---|---|
| Model ID | gemini-3.1-flash-lite-preview |
| Context Window | 1,000,000 tokens |
| Max Output | 65,536 tokens |
| Output Speed | 363 tok/s |
| Architecture | Gemini 3 Pro (distilled) |
| Release Date | March 3, 2026 |
| Status | Preview |

Google's Gemini 3 lineup: Gemini 3.1 Pro (flagship, $2.50/$12) → Gemini 3 Flash ($0.50/$3) → Gemini 3.1 Flash-Lite ($0.25/$1.50). Flash-Lite sits at the bottom, but "bottom" here means 1M context and benchmarks that would have been flagship-tier a year ago.

If you're still on Gemini 2.0 Flash or 2.0 Flash-Lite — those are deprecated. Flash-Lite is your migration path.

Pricing: A Speed Play, Not a Budget Play

Google positions Flash-Lite as "most cost-efficient," and technically that's true within the Gemini 3 family. Zoom out and the picture gets more nuanced.

Flash-Lite vs Gemini 2.5 Flash

| Metric | Gemini 3.1 Flash-Lite | Gemini 2.5 Flash | Difference |
|---|---|---|---|
| Input/1M | $0.25 | $0.30 | 17% cheaper |
| Output/1M | $1.50 | $2.50 | 40% cheaper |
| Cached Input/1M | $0.025 | $0.03 | 17% cheaper |
| Batch Input/1M | $0.125 | $0.15 | 17% cheaper |
| Batch Output/1M | $0.75 | $1.25 | 40% cheaper |

40% off output is real money at scale. But Gemini 2.5 Flash was already one of the cheapest models on the market. Going from $2.50 to $1.50 per million output tokens saves you $1 per million. On a workload doing 100M output tokens per month, that's $100. Nice, but that's not why you switch models.
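To make that arithmetic reusable, here is a minimal sketch that computes monthly cost from token volumes. The prices come from the table above; the 300M input-token volume is an assumed figure for illustration, and the dictionary keys are labels for this example rather than API model IDs.

```python
# Prices in USD per 1M tokens, copied from the Flash-Lite vs 2.5 Flash table.
# The keys are labels for this sketch, not API model IDs.
PRICES = {
    "flash-lite": {"input": 0.25, "output": 1.50},
    "2.5-flash": {"input": 0.30, "output": 2.50},
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the monthly API cost in USD for the given token volumes."""
    price = PRICES[model]
    input_cost = input_tokens / 1_000_000 * price["input"]
    output_cost = output_tokens / 1_000_000 * price["output"]
    return input_cost + output_cost


# Output volume matches the 100M example in the text; input volume is assumed.
INPUT_TOKENS = 300_000_000
OUTPUT_TOKENS = 100_000_000

lite = monthly_cost("flash-lite", INPUT_TOKENS, OUTPUT_TOKENS)
flash = monthly_cost("2.5-flash", INPUT_TOKENS, OUTPUT_TOKENS)
print(f"Flash-Lite: ${lite:.2f}  2.5 Flash: ${flash:.2f}  saved: ${flash - lite:.2f}")
```

Swap in your own token counts; with output tokens only, the difference reduces to the $100 figure above.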

You switch for speed. 363 tok/s vs 249 tok/s. A 45% jump.

Flash-Lite vs the Budget Tier (March 2026)

Flash-Lite against every budget model worth considering right now. All pricing from our LLM pricing tracker, verified March 3, 2026:

Budget LLM API Pricing Comparison (March 2026)

| Model | Context | Input/1M | Output/1M | Cached/1M | Speed |
|---|---|---|---|---|---|
| DeepSeek V3.2 | 64K | $0.28 | $0.42 | $0.028 | Medium |
| Gemini 3.1 Flash-Lite | 1M | $0.25 | $1.50 | $0.025 | 363 tok/s |
| GPT-5 mini | 400K | $0.25 | $2.00 | $0.025 | Medium |
| Gemini 2.5 Flash | 1M | $0.30 | $2.50 | $0.03 | 249 tok/s |
| Claude Haiku 4.5 | 200K | $1.00 | $5.00 | $0.10 | Fast |

Cheapest on output cost? Still DeepSeek V3.2 at $0.28/$0.42, although its input price is slightly higher than Flash-Lite's, and you're stuck with 64K context and no batch API. Flash-Lite's sweet spot is the intersection of cost, speed, and context: it's the cheapest model here with a 1M context window, and it runs at 363 tok/s. Nothing else in this table does all three.

Claude Haiku 4.5 costs 4x as much on input and 3.3x as much on output. Unless you specifically need Haiku's quality ceiling, that's a hard premium to justify.
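Which model is cheapest depends on your input/output mix and how much context you need. The sketch below filters the budget table by a minimum context window and picks the lowest blended cost. The `Model` class and `cheapest` function are hypothetical names for this example, and context sizes are treated as 1K = 1,000 tokens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    context_tokens: int
    input_price: float   # USD per 1M input tokens
    output_price: float  # USD per 1M output tokens


# Figures copied from the budget-tier comparison table.
MODELS = [
    Model("DeepSeek V3.2", 64_000, 0.28, 0.42),
    Model("Gemini 3.1 Flash-Lite", 1_000_000, 0.25, 1.50),
    Model("GPT-5 mini", 400_000, 0.25, 2.00),
    Model("Gemini 2.5 Flash", 1_000_000, 0.30, 2.50),
    Model("Claude Haiku 4.5", 200_000, 1.00, 5.00),
]


def cheapest(min_context: int, input_tokens: int, output_tokens: int) -> Model:
    """Pick the lowest-cost model whose context window is at least min_context."""
    eligible = [m for m in MODELS if m.context_tokens >= min_context]
    if not eligible:
        raise ValueError("no model in the table has a large enough context window")
    return min(
        eligible,
        key=lambda m: input_tokens / 1e6 * m.input_price
        + output_tokens / 1e6 * m.output_price,
    )


# Balanced workload that fits in a small context window.
print(cheapest(32_000, 10_000_000, 10_000_000).name)
# Same workload, but it needs more than 400K tokens of context.
print(cheapest(500_000, 10_000_000, 10_000_000).name)
# Input-heavy workload (classification-style: long prompts, short outputs).
print(cheapest(32_000, 100_000_000, 1_000_000).name)
```

The third call is the interesting one: on input-heavy workloads, Flash-Lite's lower input price can outweigh DeepSeek's cheaper output.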

Benchmarks: Where Flash-Lite Actually Wins

Flash-Lite's benchmarks are surprisingly strong for what you're paying. Not just "good for the money" — actually competitive with models costing 4-10x more.

Reasoning and Science

| Benchmark | Flash-Lite | Gemini 2.5 Flash | GPT-5 mini | Claude Haiku 4.5 |
|---|---|---|---|---|
| GPQA Diamond | 86.9% | 72.8% | 68.4% | 69.2% |
| MMMU-Pro | 76.8% | 67.1% | 63.8% | 64.5% |

86.9% on GPQA Diamond from a budget model. Gemini 3.1 Pro scores 94.3% on the same benchmark, so Flash-Lite gets you 92% of the flagship's GPQA score at 10% of the input price and 12.5% of the output price.
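The score-to-price comparison is easy to check. This short sketch uses the GPQA scores quoted here and the Gemini 3.1 Pro and Flash-Lite prices from the lineup earlier in the post; the variable names are just for illustration.

```python
# GPQA Diamond scores quoted in this section (percent).
FLASH_LITE_GPQA = 86.9
PRO_GPQA = 94.3

# USD per 1M tokens, from the Gemini 3 lineup earlier in the post.
FLASH_LITE_PRICE = {"input": 0.25, "output": 1.50}
PRO_PRICE = {"input": 2.50, "output": 12.00}

score_ratio = FLASH_LITE_GPQA / PRO_GPQA
input_ratio = FLASH_LITE_PRICE["input"] / PRO_PRICE["input"]
output_ratio = FLASH_LITE_PRICE["output"] / PRO_PRICE["output"]

print(f"GPQA score relative to Pro: {score_ratio:.1%}")
print(f"Input price relative to Pro: {input_ratio:.1%}")
print(f"Output price relative to Pro: {output_ratio:.1%}")
```

The "10% of the price" shorthand holds exactly for input tokens; for output tokens the ratio is a little higher.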

Coding

LiveCodeBench: 72.0% — this puts Flash-Lite above most budget-tier models on code generation. It won't replace Claude Sonnet 4.6 or Opus 4.6 for complex agentic coding, but for code classification, simple generation, and code review at scale, it's more than adequate.

Arena Elo

Elo 1432 on the Arena.ai leaderboard — above where Claude 3 Opus sat six months ago, and that was a $15/$75 per million token model. The "vibes" scoring (how natural responses feel to real users) is increasingly what separates models in practice. Flash-Lite holds its own.

Speed: The Real Differentiator

The numbers:

- Output speed: 363 tok/s (vs 249 tok/s for Gemini 2.5 Flash — 45% faster)

- Time-to-first-token: 2.5x faster than 2.5 Flash

Where does 45% more speed actually matter?

Real-time chat with streaming. 249 tok/s vs 363 tok/s — users notice. Responses feel snappier, the first token shows up faster, less time staring at a spinner.
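To put numbers on "snappier", here is a rough sketch that converts output speed into streaming time for replies of different lengths. It assumes a constant token rate and ignores time-to-first-token and network overhead, so treat the results as a lower bound on what users actually see.

```python
FLASH_LITE_TOK_PER_S = 363  # output speed quoted for Gemini 3.1 Flash-Lite
FLASH_25_TOK_PER_S = 249    # output speed quoted for Gemini 2.5 Flash


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to stream output_tokens at a constant token rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Reply lengths are illustrative: a short answer, a typical chat reply, a long one.
for tokens in (100, 500, 2000):
    lite = generation_seconds(tokens, FLASH_LITE_TOK_PER_S)
    flash = generation_seconds(tokens, FLASH_25_TOK_PER_S)
    print(f"{tokens:>5} tokens: Flash-Lite {lite:.2f}s, 2.5 Flash {flash:.2f}s")
```

For a 500-token reply the gap is a bit over half a second, which is the range where people start to notice.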

Voice AI and telecom. In call center AI and voice agents, the gap between a customer finishing their sentence and the AI responding is everything. Customer interrupts, changes topic mid-sentence — your system needs to generate a new response before that silence gets awkward. The 2.5x faster time-to-first-token can mean the difference between a natural conversation and a robotic pause that makes people hang up. In telecom, every 100ms you shave off improves call completion rates.

High-volume classification and routing. Processing thousands of requests per second for content moderation, intent classification, ticket routing: throughput is money. 45% more tokens per second means roughly 45% more requests through the same number of concurrent streams, as long as output generation dominates each request's time.

Where speed doesn't matter: batch processing, async pipelines, anything where you're already waiting on external APIs. If you're using Batch API (50% discount), you've already opted out of caring about latency.
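For batch workloads the comparison is purely about price. This sketch compares standard and batch cost for Flash-Lite using the rates from the pricing table; the token volumes are assumed figures for illustration.

```python
# Flash-Lite prices in USD per 1M tokens, from the pricing table.
STANDARD = {"input": 0.25, "output": 1.50}
BATCH = {"input": 0.125, "output": 0.75}


def cost(prices: dict, input_tokens: int, output_tokens: int) -> float:
    """Return the cost in USD for the given token volumes at the given rates."""
    return (
        input_tokens / 1_000_000 * prices["input"]
        + output_tokens / 1_000_000 * prices["output"]
    )


# Hypothetical nightly job: 50M input tokens, 10M output tokens.
standard_cost = cost(STANDARD, 50_000_000, 10_000_000)
batch_cost = cost(BATCH, 50_000_000, 10_000_000)
print(f"standard: ${standard_cost:.2f}  batch: ${batch_cost:.2f}")
```

Because both batch rates are half the standard rates, the batch total is half the standard total regardless of the input/output mix.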

Should You Switch from Gemini 2.5 Flash?

Depends on what you're optimizing for.

| Scenario | Recommendation |
|---|---|
| Latency-sensitive applications (chat, voice, streaming) | Yes: the speed gain is significant and the price is lower |
| High-volume classification at scale | Yes: more throughput per dollar |
| Stable production pipeline on 2.5 Flash | Wait: it's still in preview. Let it stabilize |
| Pure cost optimization (batch workloads) | Maybe: the 40% output savings are real, but test quality first |
| Complex reasoning tasks | Test first: benchmarks look great but verify on your specific workload |

Keep in mind: it's still in preview (gemini-3.1-flash-lite-preview). Google hasn't locked in stable pricing or behavior yet. Running a production pipeline that can't tolerate model changes? Wait for GA. Starting fresh or fine with preview-tier volatility? Flash-Lite beats 2.5 Flash as a default today.
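One way to live with preview volatility is to keep the model ID out of your code paths, so you can roll back without a deploy. The sketch below is one possible approach, not a Google-recommended pattern: `LLM_MODEL_ID` and `ALLOW_PREVIEW_MODELS` are hypothetical environment variable names, and `gemini-2.5-flash` is assumed to be the stable ID for Gemini 2.5 Flash (check your provider's model list).

```python
import os
from typing import Mapping, Optional

PREVIEW_MODEL = "gemini-3.1-flash-lite-preview"  # model ID from the spec table
STABLE_MODEL = "gemini-2.5-flash"  # assumed ID for Gemini 2.5 Flash; verify before use


def resolve_model_id(env: Optional[Mapping[str, str]] = None) -> str:
    """Choose a model ID from the environment, defaulting to the stable model.

    LLM_MODEL_ID (hypothetical) pins an exact model and always wins.
    ALLOW_PREVIEW_MODELS (hypothetical) opts this service in to the preview model.
    """
    env = os.environ if env is None else env
    override = env.get("LLM_MODEL_ID")
    if override:
        return override
    allow_preview = env.get("ALLOW_PREVIEW_MODELS", "").lower() in {"1", "true", "yes"}
    return PREVIEW_MODEL if allow_preview else STABLE_MODEL


print(resolve_model_id({"ALLOW_PREVIEW_MODELS": "true"}))
```

Pipelines that can't tolerate model changes leave the flag unset and stay on the stable model; everything else opts in per environment.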

If you want a framework for evaluating models on your own workload, we wrote a guide on how to identify the best model for your work.

Best Use Cases for Flash-Lite

Where Flash-Lite excels:

- High-volume classification and routing — intent detection, content moderation, ticket categorization

- Real-time chat with streaming — chatbots, customer support agents, interactive tools

- Translation pipelines — fast, cheap, good-enough quality at 363 tok/s

- Data extraction — pulling structured data from unstructured text at scale

- Voice AI and call centers — low TTFT keeps conversations natural

- Content moderation — speed matters when you're scanning millions of posts

What Flash-Lite is NOT for:

- Complex multi-step reasoning — use Gemini 3.1 Pro or Claude Sonnet 4.6

- Long-form content generation — quality ceiling matters more than speed here

- Agentic workflows with tool use — Flash-Lite hasn't been tested for multi-step agent reliability

- Tasks where accuracy is non-negotiable — it's a budget model; verify outputs for critical paths
